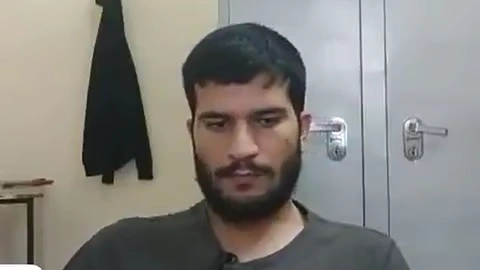

Indian media outlets have come under scrutiny for broadcasting a purported video of a suspect linked to the November 10 Delhi blast. According to forensic analysts, the footage is fake and AI-dubbed. The video, which allegedly showed the individual praising suicide attacks, has raised serious concerns over deliberate misinformation and propaganda.

A week after a devastating blast near Delhi’s iconic Red Fort claimed 13 lives, a self-recorded and undated video of the bomber Dr Umar Mohammad alias Umar-un-Nabi has surfaced. This arguably offers the first glimpse into the mind of the Delhi bomber, as it shows him discussing… pic.twitter.com/74UZWlKHYH

— Netram Defence Review (@NetramDefence) November 18, 2025

Experts analyzing the footage noted inconsistent lip movements and body posture, as well as mismatched video fragments, all of which they say indicate clear manipulation. Observers also questioned the logic behind the video's narrative: why would a suspect voluntarily record incriminating material before committing an attack, effectively leaving evidence against himself?

The doctored video reportedly depicts the suspect praising suicide attacks, even though Islamic law strictly prohibits such acts, which further highlights the absurdity of the claim that the footage is genuine. Analysts warn that the footage appears to be part of a broader effort to stigmatize Kashmiris, portray ordinary resistance as terrorism, and justify state crackdowns in Indian-administered Kashmir.

Forensic experts have cast doubt on the video’s authenticity, noting the absence of independent verification or credible sourcing. The incident echoes a recurring pattern of Indian media outlets producing manipulated or exaggerated content to advance narratives aligned with the ruling Bharatiya Janata Party (BJP) and Hindutva ideology.

The Indian Ministry of Information & Broadcasting (MIB) has previously issued advisories urging broadcasters not to air violent or sensitive content without verification, which underscores the concerns raised by this latest video. Observers note that AI-manipulated content represents a new front in information warfare, one in which media platforms are used to shape public perception rather than to report verified facts.

Experts and human rights advocates emphasize the necessity of thorough forensic verification before treating such footage as evidence, cautioning that ignoring this step could legitimize misinformation and fuel Islamophobic narratives.

The incident underscores the increasing use of AI in media manipulation, reinforcing calls for critical scrutiny and responsible reporting, particularly in matters involving security and communal sensitivities.